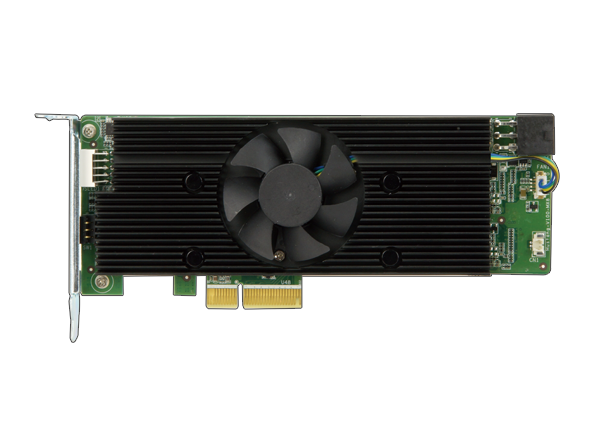

- Half-Height, Half-Length, Single-Slot Compact Size

- Low Power Consumption; Approx. 2.5 W per Intel® Movidius™ Myriad™ X VPU

- Supports the Open Visual Inference & Neural Network Optimisation (OpenVINO™) Toolkit; AI Edge Computing Ready Device

- 8x Intel® Movidius™ Myriad™ X VPUs to Execute Multiple Topologies Simultaneously

The Mustang-V100-MX8 is designed for deep learning inference while retaining power efficiency and a compact form factor. The card is half-height, half-length, and single-slot, which makes it a good fit for space-constrained applications that require AI acceleration and machine vision.

As a PCIe VPU accelerator card, it speeds up artificial intelligence workloads and is well suited to low-power applications such as surveillance, retail, and transportation. Because it combines power efficiency with high performance on DNN topologies, it fits well in AI edge computing devices, where it reduces total power usage and extends the operating time of rechargeable edge computing equipment.

Finally, it supports Intel’s OpenVINO™ toolkit, which optimises pre-trained deep-learning models from frameworks such as Caffe, MXNet, and TensorFlow; once a model has been optimised, the toolkit's inference engine can run it across different types of Intel® hardware. With eight Intel® Movidius™ Myriad™ X VPUs, the Mustang-V100-MX8 can execute multiple topologies at once.
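
To make the "multiple topologies at once" idea concrete, here is a minimal Python sketch of how several topologies could be spread across the card's eight VPUs, together with the VPU power arithmetic implied by the approximate 2.5 W figure. This is an illustration only and does not use the OpenVINO™ API: the function names, the round-robin policy, and the example topology names are assumptions, and the real scheduling is handled by the toolkit's runtime.

```python
from collections import defaultdict

NUM_VPUS = 8          # from the card description: eight Myriad X VPUs
WATTS_PER_VPU = 2.5   # approximate per-VPU figure quoted in the feature list


def assign_topologies(topologies, num_vpus=NUM_VPUS):
    """Map each topology name to a list of VPU indices, round-robin.

    Hypothetical policy for illustration; topologies beyond the first
    `num_vpus` entries receive no VPU in this simple sketch.
    """
    if num_vpus <= 0:
        raise ValueError("num_vpus must be positive")
    if not topologies:
        return {}
    assignment = defaultdict(list)
    for vpu in range(num_vpus):
        # VPU 0 gets the first topology, VPU 1 the second, and so on, wrapping around.
        assignment[topologies[vpu % len(topologies)]].append(vpu)
    return dict(assignment)


def vpu_power_estimate(num_vpus=NUM_VPUS, watts_per_vpu=WATTS_PER_VPU):
    """Approximate power drawn by the VPUs alone (not a whole-card figure)."""
    return num_vpus * watts_per_vpu


if __name__ == "__main__":
    # Example topology names are placeholders, not models named in this document.
    plan = assign_topologies(["face-detection", "vehicle-detection", "person-reid"])
    for name, vpus in plan.items():
        print(f"{name}: VPUs {vpus}")
    print(f"Estimated VPU power: {vpu_power_estimate()} W")
```

With three topologies, this sketch gives two of them three VPUs each and the third two VPUs, and the eight VPUs together account for roughly 20 W under the approximate per-VPU figure.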